Recommendations for Live

In Live workflows Unified Origin is placed between one or more Live encoders and a Content Delivery Network (CDN), with a shield cache between Origin and CDN being recommended to ensure robustness and increase performance:

Player --> CDN --> Unified Origin <-- Live encoder

For VOD the use of a CDN is recommended, but for streaming Live at scale it is a must. Through efficient caching, dynamically packaged livestreams from Origin can be delivered to the largest of audiences. Typically, a minimum of 10 channels can be served from a single Origin. To achieve this you need to take the following into account:

Configuring Live encoders to output streams compliant with Live ingest specifications supported by Unified Origin

Configuring Origin to adjust output streams to use case, adding DRM if needed

Fine tuning Apache (on which Origin runs) to your environment and use case

Adding redundancy and failover to support 24x7 streaming

Setting up a shield cache to control egress

Configuring CDN to properly cache expiring live content

Integrating Digital Rights Management (DRM) providers, if needed

Integrating 3rd-party Dynamic Ad Insertion into the workflow, if needed

Also, please refer to Troubleshooting LIVE Streaming.

Must Fix: use of Smooth Streaming or CMAF ingest

A Live encoder needs to POST (or PUT) an fMP4 packaged livestream over HTTP to a publishing point on Unified Origin so that Origin can ingest the livestream and dynamically package and deliver it based on incoming requests from clients.

There are two specifications that define how a Live encoder should do this:

Microsoft Smooth Streaming ingest, as defined in Azure Media Services fragmented MP4 live ingest specification

CMAF ingest, as defined in Interface 1 of the DASH-IF Live Media Ingest specification

Smooth Streaming ingest is supported by a lot of encoders, but isn't actively developed anymore and can therefore not be considered future proof. CMAF ingest is its spiritual successor, as it builds on the foundation of Smooth ingest with Microsoft having contributed significantly to the development of this newer specification, alongside a wide variety of industry partners. CMAF ingest fully aligns with the CMAF specification, hence its name. For these reasons, CMAF ingest is the preferred choice, if your Live encoder supports it.

In addition to media and subtitles, Origin can also ingest Timed Metadata. For this, Interface 1 of the DASH-IF Live Media Ingest specification recommends the use of a separate track, where Timed Metadata like SCTE 35 and ID3 can be carried in DashEventMessageBoxes. The advantage of using a separate track for this purpose is that it allows for faster and more efficient processing (because not all media tracks have to be scanned to determine whether they also carry Timed Metadata).

Note

Unified Origin may be able to ingest live DASH or fMP4 HLS content directly, given some additional configuration. This is not a recommended approach, but it may be an acceptable solution in certain circumstances, if the CMAF headers and fragments of the live DASH or fMP4 HLS content are formatted correctly. However, we strongly recommend asking your encoder vendor to produce output that is compliant with one of the two aforementioned ingest protocols, as Origin's compatibility with these protocols is guaranteed.

Overall, the ingest should comply with the requirements and recommendations detailed in the Content Preparation section of the Best Practices. In addition, the following three points are important:

Must Fix: use of UTC timestamps

For Live ingest, it is a requirement to configure the encoder and the server to use (wall-clock) time in UTC format, otherwise you might face timestamp issues when playing the live stream.

Without it, the encoder most likely starts at time 0, which makes it difficult to use options like Virtual subclips and makes --restart_on_encoder_reconnect impossible. UTC timestamps are also crucial for Should Fix: add Origin and encoder redundancy (using ReaP), as this requires the segment contents (including timing information) generated by each encoder to be identical.

Live encoder should use UTC timing

Unified Origin accepts timestamps in either the tfxd or the tfdt box.

You can verify your configuration through a GET request to:

http(s)://example/path/<channel-name>.isml/archive

The end time attribute in each stream should be (close to) the actual current UTC time.
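The comparison with the current UTC time can be scripted. The following is a minimal sketch, assuming you have already extracted the end time from the response as an ISO 8601 string (the exact layout of the response is not shown here). The function name `is_close_to_now` and the default drift of 10 seconds are illustrative choices, and the `-d` option requires GNU date.

```shell
#!/bin/bash

# Succeeds when an ISO 8601 UTC timestamp lies within max_drift seconds
# of the current time. Requires GNU date for the -d option.
# The function name and the default drift are illustrative assumptions.
is_close_to_now() {
  local timestamp="$1" max_drift="${2:-10}"
  local stamp_epoch now_epoch drift
  stamp_epoch=$(date --utc -d "$timestamp" +%s) || return 2
  now_epoch=$(date --utc +%s)
  drift=$((now_epoch - stamp_epoch))
  # Strip a leading minus sign so that timestamps slightly ahead of the
  # local clock are treated the same as timestamps slightly behind it
  [ "${drift#-}" -le "$max_drift" ]
}
```

For example, `is_close_to_now "2020-10-06T20:00:00Z" 10` succeeds only when run within ten seconds of that moment.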

Unified Origin should use UTC timing

Not only should the encoder output the livestream with UTC timestamps, the server running Unified Origin should use UTC timing, too. To configure your server to use UTC timing, please run the following command and follow the instructions:

#!/bin/bash

sudo dpkg-reconfigure tzdata
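To confirm the result afterwards, you could check the UTC offset that the server reports. This is a minimal sketch; the function name `server_uses_utc` is illustrative. Note that it only checks the offset, so a zone that happens to coincide with UTC (such as GMT) would also pass.

```shell
#!/bin/bash

# Succeeds when the local time zone offset of this server is +0000.
# This checks the offset only, not the name of the configured zone.
server_uses_utc() {
  [ "$(date +%z)" = "+0000" ]
}

if server_uses_utc; then
  echo "server time is reported in UTC"
else
  echo "server time zone offset is $(date +%z), expected +0000" >&2
fi
```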

Representation of UTC timing in DASH MPD

The MPD@availabilityStartTime indicates the zero point on the MPD timeline of a dynamic MPD. When using Origin for Live it defaults to UNIX epoch. In addition, MPD@publishTime specifies the wall-clock time when Origin generated the MPD (based on an incoming request).

To comply with the requirement described in paragraph 4.7.2 of version 4.0 of the DASH-IF Interoperability Guidelines (IOP), we add the UTCTiming element to the MPD when streaming Live (as of release 1.7.27):

<UTCTiming schemeIdUri="urn:mpeg:dash:utc:http-iso:2014"
           value="https://time.akamai.com/?iso"/>

Note that Origin itself simply relies on the time of the server that it runs on (as explained above) and does not synchronize its time with the time.akamai.com server (although you may synchronize your Origin server's time with Akamai's).

Representation of UTC timing in HLS playlists

When Origin recognizes the use of UTC timestamps, it adds the EXT-X-PROGRAM-DATE-TIME tag to the HLS playlists it generates. A player can then use it as a basis for its timeline presentation.

The date/time representation is ISO 8601 and always indicates the UTC timezone:

#EXT-X-PROGRAM-DATE-TIME:<YYYY-MM-DDThh:mm:ssZ>
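As an illustration of this representation, the sketch below formats a Unix timestamp the same way. It only demonstrates the date format and is not how Origin generates the tag; the function name `program_date_time` is illustrative, and the `-d @<seconds>` syntax requires GNU date.

```shell
#!/bin/bash

# Prints an EXT-X-PROGRAM-DATE-TIME tag for a Unix timestamp given in
# whole seconds. Requires GNU date for the -d @<seconds> syntax.
program_date_time() {
  printf '#EXT-X-PROGRAM-DATE-TIME:%s\n' \
    "$(date --utc -d "@$1" +%Y-%m-%dT%H:%M:%SZ)"
}

# 1602014400 is 20:00:00 UTC on 6 October 2020
program_date_time 1602014400
# prints: #EXT-X-PROGRAM-DATE-TIME:2020-10-06T20:00:00Z
```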

Must Fix: Live encoder pushes stream to publishing point using HTTP POST

As specified in Interface 1 of the DASH-IF Live Media Ingest specification (and Azure Media Services fragmented MP4 live ingest specification), a Live encoder must push the live stream to a publishing point using HTTP POST (or PUT). It may do so by delivering each segment individually or through a long-running POST (using chunked transfer encoding).

It is recommended that an encoder sends an initial HTTP/1.1 handshake (Expect: 100-Continue header + empty body) before sending the (chunked) payload.

A live encoder must push to a publishing point using the following URL syntax:

http(s)://example/path/<channel-name>.isml/Streams(<stream-identifier>)

Where the <stream-identifier> should be unique for each stream that it pushes (note that a live stream may consist of several streams, with each stream containing one or more tracks).
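The URL syntax can be illustrated with a small helper. This is a sketch only: the host name `origin.example`, the path, and the function names are hypothetical, and a real Live encoder would normally perform the push itself. `post_stream` posts a single fragmented MP4 file with cURL and is defined but not invoked here, because it needs a reachable Origin.

```shell
#!/bin/bash

# Builds the ingest URL for one stream of a publishing point.
# Arguments: base URL (scheme, host and path), channel name, stream identifier.
ingest_url() {
  printf '%s/%s.isml/Streams(%s)\n' "$1" "$2" "$3"
}

# Posts a single fragmented MP4 file to the given ingest URL.
# Arguments: file to post, ingest URL.
post_stream() {
  curl --fail --data-binary "@$1" -X POST "$2"
}

# Hypothetical host, path, channel and stream identifier
ingest_url "http://origin.example/live/channel1" "channel1" "video"
# prints: http://origin.example/live/channel1/channel1.isml/Streams(video)
```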

The main advantage of posting multiple (multiplexed) tracks in a single stream is that they are implicitly synchronized, i.e., either all bitrates are there for some interval or none are.

Track bit rate hack (for testing purposes only)

Track bit rate may be signaled (as a last resort hack) in the stream identifier, where a decimal number following a dash is interpreted as the bit rate in kilobits per second of each (first, second, etc.) track found in the stream, for instance:

<channel-name>.isml/Streams(stream-3000k-128k)

This brittle setup is not recommended and is intended for testing purposes only. It should not be used in any production setup.

Must Fix: send End-Of-Stream (EOS) signal at end of stream

At the end of a Live streaming event the encoder must send an EOS signal for all of the livestream's tracks. This will properly signify the end of a Live broadcast and make the publishing point switch to a 'stopped' state. An EOS signal consists of an empty MfraBox with no embedded sample entries in the TfraBox and no MfroBox following, as specified by ISO/IEC-14496-12 (see 3.3.4.2 Stop Stream).

The empty MfraBox is the following 8-byte sequence:

00 00 00 08 6d 66 72 61
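The byte sequence above can be produced with standard command-line tools. The sketch below is for testing by hand and rests on assumptions: the function names are illustrative, and `send_eos` (which needs a reachable publishing point) is defined but not invoked. In production the encoder should send the EOS signal itself.

```shell
#!/bin/bash

# Writes the 8-byte empty mfra box to stdout:
# a 32-bit big-endian size of 8, followed by the box type 'mfra'.
eos_box() {
  printf '\000\000\000\010mfra'
}

# Sends the EOS signal to one stream of a publishing point.
# Argument: the ingest URL of the stream.
send_eos() {
  eos_box | curl --fail --data-binary @- -X POST "$1"
}

# Show the generated bytes in hexadecimal
eos_box | od -An -tx1
```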

Note

The above has been extended by the following section from paragraph 6.1 from Interface 1 of the DASH-IF Live Media Ingest specification:

"At the end of the session, the source may send an empty mfra (deprecated) box or a segment with the lmsg brand. Then, the ingest source can follow up by closing the TCP connection using a TCP FIN packet."

Stop your encoder and query the REST API of the Live Publishing point to check its state, which should be 'stopped' (see Retrieving publishing point information for more information).

Attention

If you have followed Should Fix: add Origin and encoder redundancy (using ReaP), an encoder should not send the EOS signal when it stops, because that signals the end of the stream: Origin then closes the archive on disk, and it stays closed from that point on. When you want to switch off one of the encoders in an HA setup, 'pull the plug' and do not send EOS, so that the stream is not closed.

Must Fix: Set up a publishing point (including configuration)

Publishing points should be created with a script. This script needs to take the following into account:

An unused publishing point is a directory that contains a Live server manifest (.isml), which should be created with 'mp4split'

The publishing point is hosted by Apache, in a location that the encoder can successfully access through HTTP POST (or PUT)

Apache should be the owner of the publishing point and have read and write access to it (the directory itself, as well as the Live server manifest)

The publishing point's directory and the Live server manifest inside should have the same name (e.g., channel1 and channel1.isml)

The script that creates the publishing point should rely on mp4split to create the Live server manifest. This Live server manifest contains the configuration of the publishing point.
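A minimal sketch of such a script is shown below. It assumes that publishing points live under a root directory of your choosing and that mp4split is on the PATH; the function names and the `MP4SPLIT` override (handy for a dry run) are illustrative and not part of Origin. Any options for the publishing point are passed straight through to mp4split.

```shell
#!/bin/bash

# Prints the path of the Live server manifest for a channel:
# <root>/<channel>/<channel>.isml
manifest_path() {
  printf '%s/%s/%s.isml\n' "$1" "$2" "$2"
}

# Creates the publishing point directory and its Live server manifest.
# Arguments: root directory, channel name, then any mp4split options.
# Set MP4SPLIT to another command (e.g. 'echo') for a dry run.
create_publishing_point() {
  local root="$1" channel="$2"
  shift 2
  mkdir -p "$root/$channel" || return 1
  "${MP4SPLIT:-mp4split}" -o "$(manifest_path "$root" "$channel")" "$@"
}

# Example call (not executed here; root directory and values are assumptions):
#   create_publishing_point /var/www/live channel1 \
#     --archiving=true --archive_length=86400 \
#     --archive_segment_length=600 --dvr_window_length=3600
```

After creating the publishing point, ownership of the directory and manifest still needs to be given to the user that Apache runs as, which differs per distribution.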

Certain options for Origin should be configured explicitly instead of relying on the preset defaults, because many options have evolved over time while their defaults have often remained the same for backwards compatibility reasons. Some requirements and recommendations are listed below.

Should Fix: Explicitly specify archiving and DVR window options

The most important options to configure explicitly are related to the archive that Origin keeps on disk and the window of the stream's timeline that it makes accessible through the client manifests that it generates. The longer the archive, the further back in time Origin's Restart and Catch-up TV options can reach. The longer the DVR window, the further back a viewer can rewind the stream. When setting these options, the following should be kept in mind:

Archiving is a legacy switch and should always be enabled (archiving=true)

The archive_length should be a multiple of archive_segment_length

The dvr_window_length must be shorter than the archive_length

The exact configuration will depend on your use case, but the archive length should not exceed 7 days and the DVR window length should not exceed several hours. Regarding the DVR window, take into consideration that:

Having a DVR window is required because a player must be able to download a few media segments to use as buffer (e.g., the DVR window must be at least three times the length of your HLS media segments for your stream to be compliant with the HLS specification).

The longer the DVR window the longer HLS Media Playlists will be, with the same being true for Smooth and DASH client manifests in many cases as well (depending on how exactly the timelines are represented in these cases).

The longer the DVR window the more content you will need to keep cached on your CDN in order to guarantee efficient delivery at scale.

A DVR window of a considerable size will impact the time it takes for Origin to generate the client manifests for each output format.

Having said that, the following table may offer some guidance (archive_length, archive_segment_length and dvr_window_length are all configured in seconds):

| Use cases / configuration | Pure live | Archiving, no 'DVR' | Archiving and 'DVR' |
|---|---|---|---|
| archiving | true | true | true |
| archive_length | 120 | 86400 (24h) | 86400 (24h) |
| archive_segment_length | 60 | 600 (10m) | 600 (10m) |
| dvr_window_length | 30 | 30 | 3600 (1h) |
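Before creating a publishing point, a script could check the chosen values against the two constraints stated earlier in this section (archive_length a multiple of archive_segment_length, dvr_window_length shorter than archive_length). The sketch below is one way to do that; the function name `validate_live_options` is illustrative and not part of Origin or mp4split.

```shell
#!/bin/bash

# Checks archive and DVR window values; all arguments are in seconds.
# Arguments: archive_length, archive_segment_length, dvr_window_length.
validate_live_options() {
  local archive_length="$1" archive_segment_length="$2" dvr_window_length="$3"
  if [ $((archive_length % archive_segment_length)) -ne 0 ]; then
    echo "archive_length must be a multiple of archive_segment_length" >&2
    return 1
  fi
  if [ "$dvr_window_length" -ge "$archive_length" ]; then
    echo "dvr_window_length must be shorter than archive_length" >&2
    return 1
  fi
}

# The 'Archiving and DVR' column of the table passes both checks
validate_live_options 86400 600 3600 && echo "options are consistent"
```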

Note

To offer your viewers the possibility to record and rewatch content using an efficient and reliable solution, we recommend Unified Remix - nPVR.

Must Fix: Enable --restart_on_encoder_reconnect

Using the --restart_on_encoder_reconnect option is highly recommended because it allows an encoder to reconnect and continue pushing a stream after the stream was accidentally switched to a 'stopped' state.

This setting forces the publishing point to continue as though the end-of-stream was never sent by the encoder. The encoder configuration must not change between reconnects; the stream is expected to be a continuation of the media previously ingested.

Notice that while --restart_on_encoder_reconnect improves robustness, we recommend relying on this leniency only for recovering from accidents. Interruption and continuation of a live presentation will have observable artifacts.

Verify that publishing point is ready for ingest

To verify that a publishing point is ready to ingest from a Live encoder you can make a GET request to:

http(s)://example/path/<channel-name>.isml/state

The response should indicate the 'state' of the publishing point to be 'idle'.
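This check can be scripted with cURL. The sketch below assumes that the response carries the state in an element of the form `<meta name="state" content="..."/>`; verify this against the actual response of your Origin before relying on it. The function names and the sample response are illustrative, and `publishing_point_state` is defined but not invoked because it needs a reachable Origin.

```shell
#!/bin/bash

# Extracts the state from a response read on stdin.
# ASSUMPTION: the state appears as <meta name="state" content="..."/>.
parse_state() {
  sed -n 's/.*<meta name="state" content="\([^"]*\)".*/\1/p'
}

# Fetches and prints the state of a publishing point.
# Argument: the URL of the Live server manifest (ending in .isml).
publishing_point_state() {
  curl --fail --silent "$1/state" | parse_state
}

# Hypothetical sample response, for illustration only
printf '%s\n' '<smil><head><meta name="state" content="idle"/></head></smil>' | parse_state
# prints: idle
```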

Verify that publishing point has successfully started ingest

To verify that ingest was started successfully you can make a GET request to the same 'state' endpoint:

http(s)://example/path/<channel-name>.isml/state

The response should now indicate 'started'.

If the publishing point has successfully started ingest, the Live server manifest will now list all the stream's tracks, a database file (db3) will have been created, and Origin will have started storing the ingested media in one or more .ismv files.

More detailed information about individual tracks can be obtained through a GET request to the 'statistics' and 'archive' endpoints:

http(s)://example/path/<channel-name>.isml/statistics

http(s)://example/path/<channel-name>.isml/archive

Note

A 'started' state only indicates that ingest started at some point. It does not indicate that a stream is currently being ingested. Therefore, it is not a good means to check the health of your publishing point, or Origin in general.

Should Fix: add Origin and encoder redundancy (using ReaP)

Using two encoders will ensure that ingest continues even if one of the encoders becomes unavailable. This can be combined with a dual Origin setup where the encoders cross-post: a 2x2 setup. Both Origin and encoder will have maximum resilience:

 origin1     origin2
    |    \  /    |
    |    /  \    |
encoder1     encoder2

Note

General guidelines for failover of live encoders and origins are defined in Interface 1 of the DASH-IF Live Media Ingest specification.

Note

The ISO/IEC 23009-9:2025 reference architecture uses a Dual encoder setup (failover) as outlined on this page. For further details see Redundant Encoding and Packaging for Segmented Live Media (ReaP).

Dual encoder setup (failover)

To let two encoders successfully push the same livestream to a publishing point on Origin (or, in a fully resilient setup, cross-post to two Origins), both encoders should be time-aligned. They should be configured to output segments of the same, constant length, too. This will ensure that:

Both encoders insert the same timestamps into the segments they are pushing

Segments can be aligned across both encoders (i.e., have the same length and start at the same time).

With the same content being pushed from two sources, Origin will simply ingest whichever segment arrives first, and discard the second one.

This setup can be achieved by making sure the two encoders share the exact same time source for their output's timestamps. One way to guarantee this is to have both encoders pass on the timestamps of the shared source they rely on for the livestream, if the timing information in that source is reliable and makes sense (e.g., is Unix Epoch based).

To make sure the output of both encoders is not only time aligned but aligned across segment boundaries as well, it is important to have the encoders start (and restart) at exact multiples of the configured segment length, counting from a well-defined point in the past (e.g., Unix Epoch). This is described in a little more detail below.

Perfectly align start of two encoders

Assume that it is 20:00 on October 6th of 2020, UTC time, and you have configured your encoders to output segments of 1.92 seconds. If you convert the current time to a timestamp of seconds since Epoch you will get 1602014400, and it turns out that is exactly 834382500 x 1.92. In other words, if one of your encoders had just dropped out and needed to rejoin and start pushing the livestream again, it should do so at the next multiple at 20:00:01.920, or any other multiple after that (e.g. 20:00:03.840, 20:00:05.760, …).

Or, as a rudimentary calculation using standard Bash command-line tools to calculate the offset in nanoseconds:

#!/bin/bash

timescale=1000000000

# 1.92s in a 1e9 (nanosecond) timescale
segment_length=$((timescale * 48 / 25))

# nanoseconds since 1970
time_since_epoch=$(date --utc +%s%N)

# ceil(time_since_epoch / segment_length)
next_segment=$(((time_since_epoch + segment_length - 1) / segment_length))

# start of the next segment boundary, in nanoseconds since 1970
next_offset=$((next_segment * segment_length))

echo "next offset in nanoseconds: $next_offset"

Ingest one livestream on two Origins (failover)

If your encoder supports it you can push the same livestream to two Origins for redundancy purposes. This is commonly referred to as dual ingest.

When creating a duplicate Origin setup it is important that the options for both publishing points are identical, so that the output of both Origins is identical and a client can seamlessly switch between them.

When you do not start pushing the livestream to both Origins at the exact same time, it is possible to synchronize the part of the Live archive that the second Origin is missing with that of the first.

Synchronizing archive from one Origin with another

Since the Live archive that Origin keeps on disk is already in a format that Origin can ingest, and because Origin handles duplicate segments without issue (by simply ignoring any that have already been ingested), it is possible to simply POST the archived files from the first Origin to the second Origin.

For example with cURL:

#!/bin/bash

curl --data-binary @/var/www/chan1/chan1-33.ismv \
  -X POST "http://origin2/chan1/chan1.isml/Streams(chan1)"

curl --data-binary @/var/www/chan1/chan1-34.ismv \
  -X POST "http://origin2/chan1/chan1.isml/Streams(chan1)"

curl --data-binary @/var/www/chan1/chan1-35.ismv \
  -X POST "http://origin2/chan1/chan1.isml/Streams(chan1)"
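When many archive files need to be synchronized, the same requests could be made in a loop. The sketch below assumes that archive files follow the `<channel>-<number>.ismv` naming seen in the example above and that posting them in ascending order is desirable. The function names are illustrative, `sort -V` requires GNU sort, and `sync_archive` is defined but not invoked because it needs a reachable second Origin.

```shell
#!/bin/bash

# Lists the archive files of a channel in ascending numeric order
# (e.g. chan1-9.ismv before chan1-10.ismv). Uses GNU sort -V.
list_archive_files() {
  local dir="$1" channel="$2"
  find "$dir" -maxdepth 1 -name "$channel-*.ismv" | sort -V
}

# Posts every archive file of a channel to a stream on another Origin.
# Arguments: archive directory, channel name, ingest URL on the target.
sync_archive() {
  local dir="$1" channel="$2" target_url="$3" file
  list_archive_files "$dir" "$channel" | while IFS= read -r file; do
    curl --fail --data-binary "@$file" -X POST "$target_url" || return 1
  done
}

# Example call (not executed here):
#   sync_archive /var/www/chan1 chan1 \
#     "http://origin2/chan1/chan1.isml/Streams(chan1)"
```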

To make dual ingest work in the best possible way, let the encoder start pushing to the second Origin at the exact same time as the first, or have it start at a multiple of the configured archive_segment_length (counting from when it started pushing to the first Origin).
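The start time for the second Origin can be calculated in the same way as the encoder offset earlier on this page. The sketch below works in whole seconds; the function name `next_aligned_start` and the example values are illustrative.

```shell
#!/bin/bash

# Prints the first moment at or after 'now' that lies a whole number of
# archive segments after 'first_start'. All values are in whole seconds.
# Arguments: first_start, now, archive_segment_length.
next_aligned_start() {
  local first_start="$1" now="$2" archive_segment_length="$3"
  local elapsed=$((now - first_start))
  # ceil(elapsed / archive_segment_length)
  local segments=$(((elapsed + archive_segment_length - 1) / archive_segment_length))
  echo $((first_start + segments * archive_segment_length))
}

# Pushing to the first Origin started at 1602014400, it is now 130 seconds
# later, and archive_segment_length is 60: the next aligned start is after
# 3 segments (180 seconds).
next_aligned_start 1602014400 1602014530 60
# prints: 1602014580
```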

This approach is similar to the one described in Perfectly align start of two encoders and will ensure that the start and end times of all archive segments that both Origins write to disk will be perfectly aligned (although this alignment is not as crucial as with a dual encoder setup, and may be ignored).

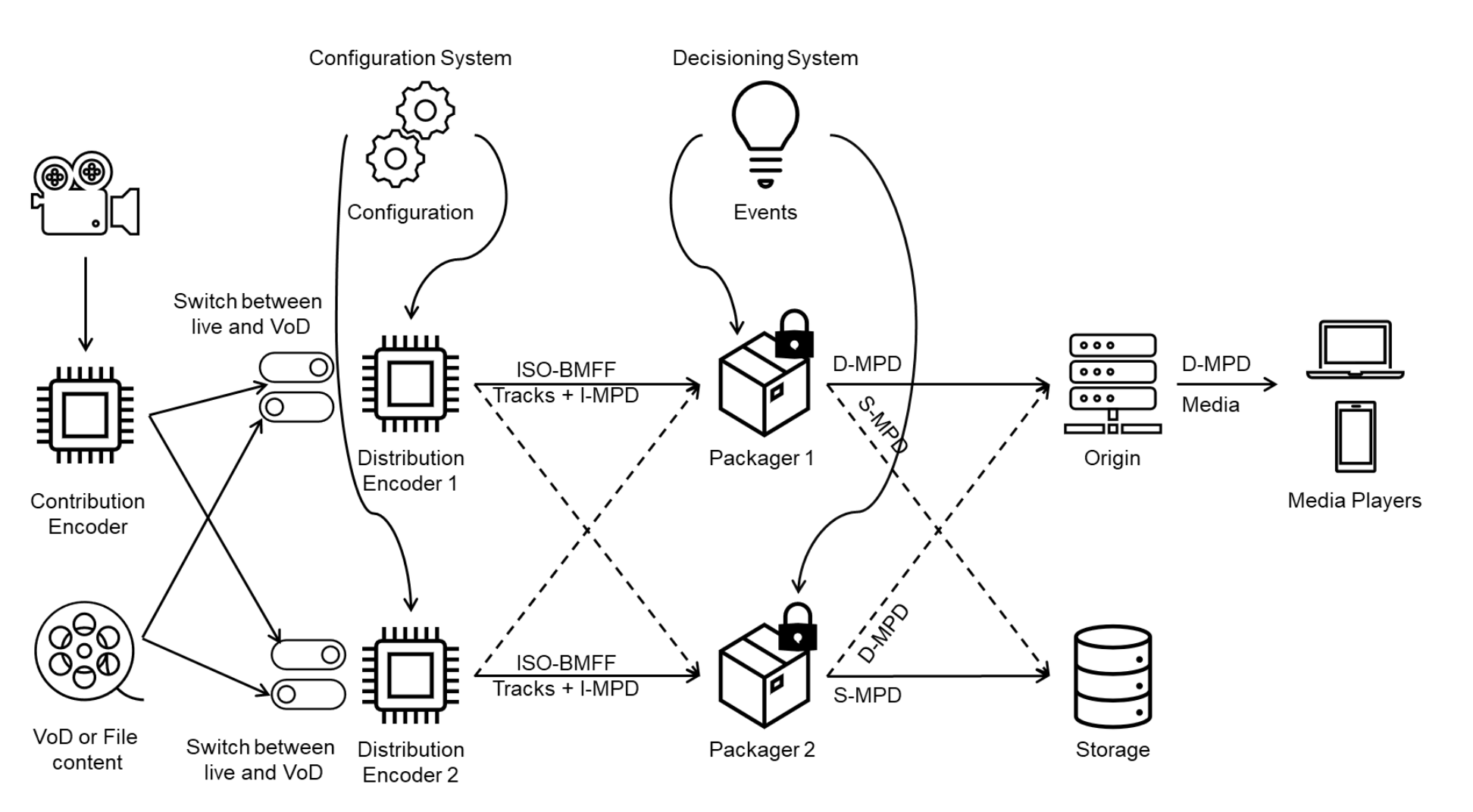

Redundant Encoding and Packaging for Segmented Live Media (ReaP)

Schematically

ISO/IEC 23009-9:2025 specifies a reference architecture for redundant encoding and packaging of segmented live media (depicted above). In addition, it specifies format constraints to enable encoding and packaging of interchangeable media segments and media presentation descriptions. This document also specifies formats for 24x7 live recording and archiving of segmented live media.

The standard includes profiling the Dynamic Adaptive Streaming over HTTP (DASH) Media Presentation Description for ingest, storage and redundant packaging applications. Further, a Common Media Application Format (CMAF) segment and track format is defined to support redundant encoding and packaging using a common timeline relative to the Unix epoch, an approach discussed above in Perfectly align start of two encoders and in the EpochSegmentSync paper.

The ReaP blog post provides a little background on the history of ReaP and how it helps build redundancy into a streaming workflow to ensure continuity of presentation in case a source fails. ReaP is also about storing presentations for viewing later, using a similar approach to VOD.

Must Fix: Ensure connection between Live encoder and Origin is secure

A Live encoder and Origin might not always be part of the same network, safely shielded from the open internet. A practical example would be an on-premises encoder posting a live stream to Origin running in the cloud. In such cases, it becomes more important to ensure the connection between the encoder and Origin is secure.

Ideally, Origin would not be connected to the open internet at all. Therefore, the recommendation in such cases would be to work with a VPN that you let the encoder connect to. If this is not possible, a combination of (Origin) authentication, IP restrictions, and SSL/TLS is a suggested approach.

Should Fix: Disable anti-virus/malware scanning on publishing points and database files

Directories containing publishing points and database files should be excluded from anti-virus/malware scans. Active scanning can cause file locking or corruption, which degrades performance.

If you're using the --database_path option to store database files in a custom location, ensure that directory is also excluded from scanning.

CMAF ingest test streams

Example streams utilizing the CMAF Ingest specification can be found at:

Learn more

Check our tutorial on Getting Started with Live.

Read our Unified Origin - LIVE documentation.